OCAPE

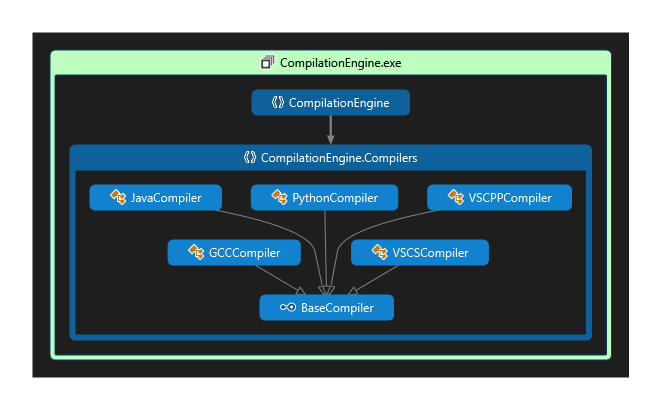

Software for managing, compiling, evaluating and reporting on programming solutions to various tasks. Supports C++, C#, Python and Java. Works on Windows and (with a limited feature set) Linux distributions.

This was my MSc dissertation project. I am really proud of how well it turned out and how well it performs. The compilation and evaluation engines in particular could be of great use to various parties.

All the code is hosted on GitHub. An in-depth report can be found at this link.

Pitch

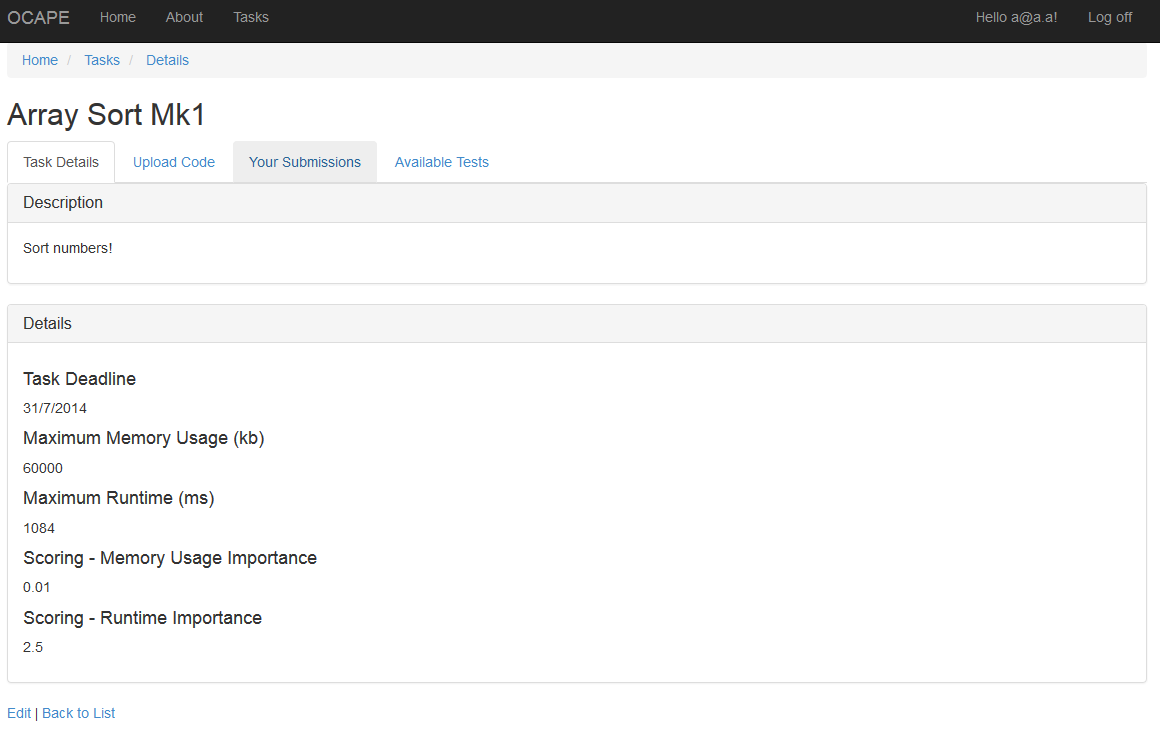

Educational institutions, such as universities or high schools, often organise programming competitions for their students. Researchers are always on the lookout for faster, more accurate or more memory-efficient solutions to a wide variety of programming tasks. The tools available for performance analysis and feedback in these settings are limited to development tools, which often offer little automation or support for accepting code from a crowd.

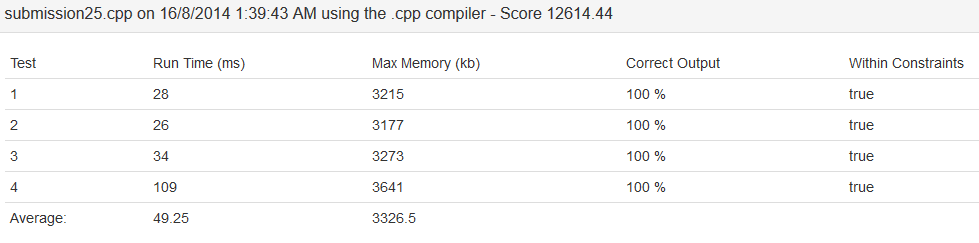

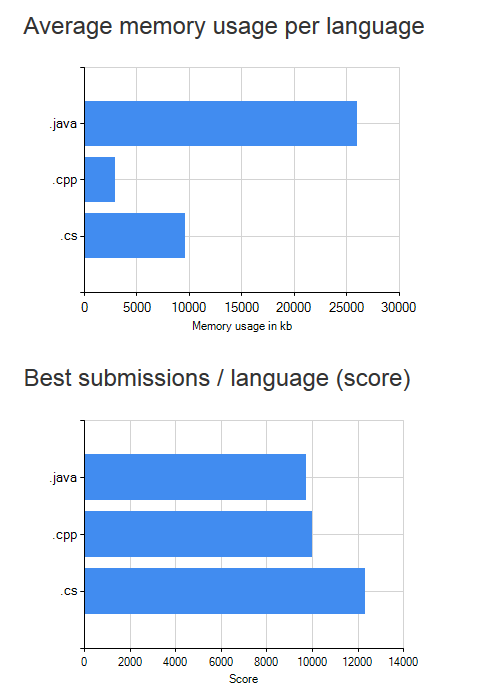

By creating a tool for automated compilation, test generation and performance report generation, researchers or competition organisers can focus on tweaking the tasks and rewarding great results, rather than dedicating a lot of time to testing every individual solution one by one. By being able to immediately compare the performance of two or more solutions, programmers could exchange code to further improve their solutions.

This project aims to create such a tool: one that allows the submission, compilation and testing of code in a variety of programming languages against a given programming task’s requirements and constraints. It would let competition organisers concentrate on designing interesting tasks first, then simply accept submissions from the competitors and get the results in real time. Researchers would be able to maintain a database of solutions to their problems, with metrics on speed, accuracy and resource usage. All of this would be available on the web, with computation done server-side, for a great degree of mobility and stability.
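To make the idea of testing a submission against a task more concrete, here is a minimal sketch in Python. It is not OCAPE's actual engine: the names `CaseResult`, `run_case` and `evaluate` are hypothetical, and it assumes a submission is a command that reads its input from standard input and writes its answer to standard output. It only measures wall-clock time and the fraction of test cases passed, and leaves out compilation, sandboxing and memory measurement.

```python
import subprocess
import sys
import time
from dataclasses import dataclass


@dataclass
class CaseResult:
    """Outcome of one test case (hypothetical structure, for illustration)."""
    passed: bool
    seconds: float
    timed_out: bool


def run_case(command, stdin_text, expected, time_limit=2.0):
    """Run one test case: feed stdin, compare trimmed stdout, record wall time."""
    start = time.perf_counter()
    try:
        proc = subprocess.run(
            command,
            input=stdin_text,
            capture_output=True,
            text=True,
            timeout=time_limit,
        )
    except subprocess.TimeoutExpired:
        # The submission exceeded the task's time constraint.
        return CaseResult(False, time.perf_counter() - start, True)
    elapsed = time.perf_counter() - start
    passed = proc.returncode == 0 and proc.stdout.strip() == expected.strip()
    return CaseResult(passed, elapsed, False)


def evaluate(command, cases, time_limit=2.0):
    """Run every (input, expected_output) pair and summarise the results."""
    if not cases:
        raise ValueError("at least one test case is required")
    results = [run_case(command, inp, exp, time_limit) for inp, exp in cases]
    return {
        "accuracy": sum(r.passed for r in results) / len(results),
        "total_seconds": sum(r.seconds for r in results),
        "timeouts": sum(r.timed_out for r in results),
    }
```

As a usage example, a one-line submission that sums the integers on its input could be passed as `[sys.executable, "-c", "import sys; print(sum(map(int, sys.stdin.read().split())))"]`, together with cases such as `[("1 2 3", "6"), ("10 20", "30")]`. Two solutions to the same task can then be compared by calling `evaluate` on each and looking at the resulting summaries side by side.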